- #AIRFLOW HELM CHART HOW TO#

- #AIRFLOW HELM CHART SOFTWARE#

- #AIRFLOW HELM CHART CODE#

- #AIRFLOW HELM CHART FREE#

Assuming that you know Apache Airflow and how its components work together, the idea is to show you how you can deploy it to run on Kubernetes, leveraging the benefits of the KubernetesExecutor, with some extra information on the Kubernetes resources involved (YAML files). That is the main point of this article. The examples will be AWS-based, but I am sure that with a little research you can port the information to any cloud service you want, or even run the code on-prem.

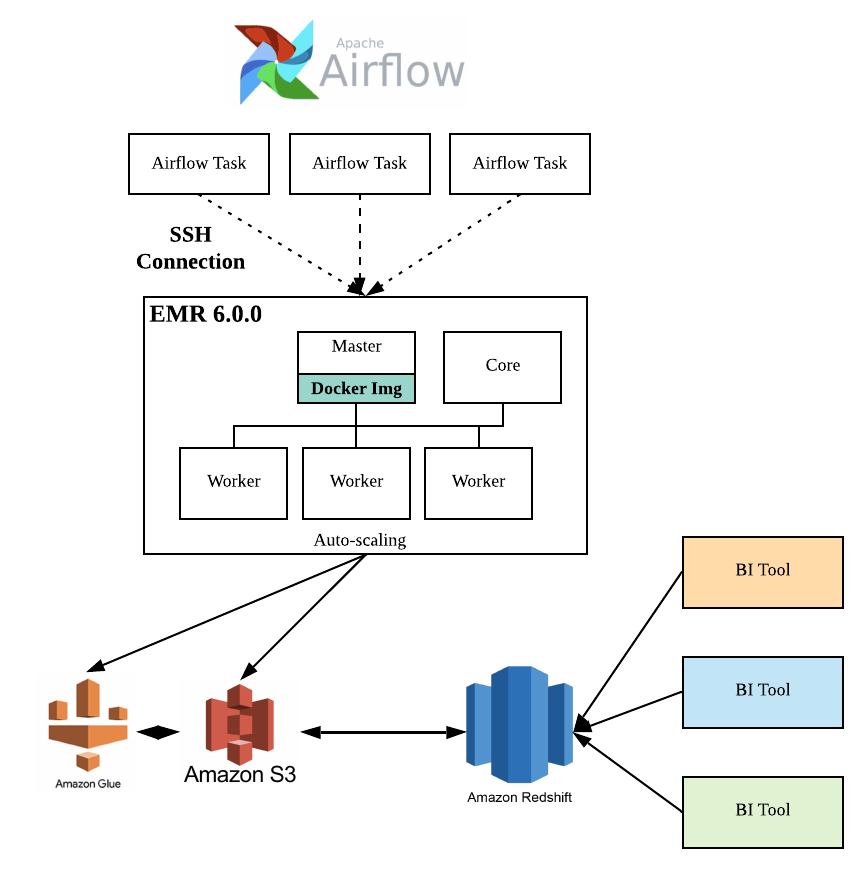

When the Apache Airflow task landing chart looks more like a Pollock painting (source: author)

A brief introduction

My humble opinion on Apache Airflow: basically, if you have more than a couple of automated tasks to schedule, and you are fiddling around with cron tasks that run even when some dependency of them fails, you should give it a try. But if you are not willing to just accept my words, feel free to check these posts. ¹ ² ³ Delving into Airflow concepts and how it works is beyond the scope of this article. For that matter, please check these other posts.

Long story short, its task definitions are code based, which means that they can be as dynamic as you wish. You can create tasks and define your task dependencies based on variables or conditionals. It has plenty of native operators (definitions of task types) ⁶ that integrate your workflow with lots of other tools and allow you to run anything from the most basic shell scripts to parallel data processing with Apache Spark, and a plethora of other options. Contributor operators ⁷ are also available for a great set of commercial tools, and the list keeps growing every day. These operators are Python classes, so they are extensible and can be modified to fit your needs. You can even create your own operators from scratch, inheriting from the BaseOperator class. Also, it makes your workflow scalable to hundreds or even thousands of tasks with little effort using its distributed executors such as Celery or Kubernetes.

We have been using Airflow at iFood since 2018. Our first implementation was really nice, based on Docker containers to run each task in an isolated environment. It has undergone a lot of changes since then, from a simple tool serving our team’s workload to a task scheduling platform serving more than 200 people, with a lot of abstractions on top of it. In the end, it does not matter if you are a software engineer with years of experience or a business analyst with minimal SQL knowledge; you can schedule your task using our platform by writing a YAML file with three simple fields: the ID of your task, the path of the file containing your queries, and the names of its table dependencies (i.e. to run my task I depend on the tables orders and users), and voilà, you have your task scheduled to run daily. But this, unfortunately, is a topic for a future article.

Piece of a giant DAG with more than 1000 tasks. Most of them are Apache Spark / Hive jobs scheduled by business analysts or data analysts (source: author)

Why Kubernetes?

It was obvious that we would need to scale our application from an AWS t2.medium EC2 instance to something more powerful. Our first approaches were to scale vertically to an r4.large instance, and then to an r4.xlarge, but the memory usage was constantly increasing. There are dozens of tasks being created every day, and suddenly we would be running on an r4.16xlarge instance. We needed a way to scale the application horizontally and, more than that, to upscale it for the peak hours and to downscale it at dawn to minimize needless costs. At that point, we were migrating all our platforms to run on a Kubernetes cluster, so why not Airflow?

I searched on the internet, from the official Apache Airflow documentation to Medium articles, digging for information on how to run a simple implementation of Airflow on Kubernetes with the KubernetesExecutor (I was aware of the CeleryExecutor's existence, but it would not fit our needs, considering that you need to spin up your workers upfront, with no native auto-scaling). I found a lot of people talking about the benefits of running Airflow on Kubernetes, the architecture behind it and a bunch of Helm charts, but little information on how to deploy it, piece by piece, in a logical way for a Kubernetes beginner.
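
As a rough illustration of the three-field task file described earlier (task ID, query file path, table dependencies), the following Python sketch models such a definition and derives a valid run order from the declared dependencies. Everything here is an assumption made for illustration: the `TaskSpec` and `execution_order` names, the file paths, and the convention that a task ID doubles as the name of the table it produces. None of it is taken from the actual iFood platform, and a real setup would hand these dependencies to Airflow rather than sort them by hand.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter  # standard library, Python 3.9+


@dataclass(frozen=True)
class TaskSpec:
    """Hypothetical three-field task definition (one per YAML file)."""
    task_id: str                      # assumed to also name the table the task produces
    query_path: str                   # path of the file containing the queries
    depends_on: tuple[str, ...] = ()  # names of the tables this task reads


def execution_order(specs):
    """Return task IDs in an order that respects the declared dependencies."""
    known = {spec.task_id for spec in specs}
    graph = {}
    for spec in specs:
        missing = [dep for dep in spec.depends_on if dep not in known]
        if missing:
            raise ValueError(f"{spec.task_id} depends on unknown tables: {missing}")
        # TopologicalSorter expects a mapping of node -> set of predecessors.
        graph[spec.task_id] = set(spec.depends_on)
    # static_order() raises graphlib.CycleError if the dependencies are circular.
    return list(TopologicalSorter(graph).static_order())


# Illustrative data only: "to run my task I depend on the tables orders and users".
specs = [
    TaskSpec("daily_revenue", "queries/daily_revenue.sql", ("orders", "users")),
    TaskSpec("orders", "queries/orders.sql"),
    TaskSpec("users", "queries/users.sql"),
]

if __name__ == "__main__":
    # "orders" and "users" come first (in either order), then "daily_revenue".
    print(execution_order(specs))
```

The point of the sketch is only that a declarative file with these three fields carries enough information to build the dependency graph; scheduling, retries and execution would still be Airflow's job.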